Every photo every iPhone takes is thanks, in some small part, to these millions of images, filtered twice through an enormous machine-learning system.īut it’s not just that the camera knows there’s a face and where the eyes are.

That trick made running it on a phone possible. The model was too big, though, so they trained a smaller version on the outputs of the first. The company trained one machine-learning model to find faces in an enormous number of pieces of images. Nothing creepy about this Mug Life image at all (Alexis Madrigal / Mug Life)Īll this work, which was incredibly difficult a decade ago, and possible only on cloud servers very recently, now runs right on the phone, as Apple has described. All these data are available to app developers, which is one reason for the proliferation of apps to manipulate the face, such as Mug Life, which takes single photos and turns them into quasi-realistic fake videos on command. Finally, the face and rest of the foreground are depth mapped, so that a face can pop out from the background. Then, the phone fixes on the face’s “landmarks” to know where the eyes and mouth and other features are. Apple has literally created new silicon chips to be able to, as the company promises, consider your face “even before you shoot.” First, there’s facial detection. They’ve dedicated enormous resources to taking pictures of faces. The phone manufacturers and app makers seem to agree that selfies drive their business ecosystems. And to Mirzoeff, there is no better example of the “new networked, urban global youth culture” than the selfie. FILTERS FOR PHOTOS TO MAKE ME LOOK BETTER HOW TOIn How to See the World, the media scholar Nicholas Mirzoeff calls photography “a way to see the world enabled by machines.” We’re talking about not only the use of machines, but the “ network society” in which they produce images.

And probably a lot more important to the future of technology companies. It is ubiquitous and low temperature, but no less effective. “Put differently, we’re not so far from the collapse of reality.” Deepfakes are one way of melting reality another is changing the simple phone photograph from a decent approximation of the reality we see with our eyes to something much different. The stakes can be high: Artificial intelligence makes it easy to synthesize videos into new, fictitious ones often called “deepfakes.” “We’ll shortly live in a world where our eyes routinely deceive us,” wrote my colleague Franklin Foer.

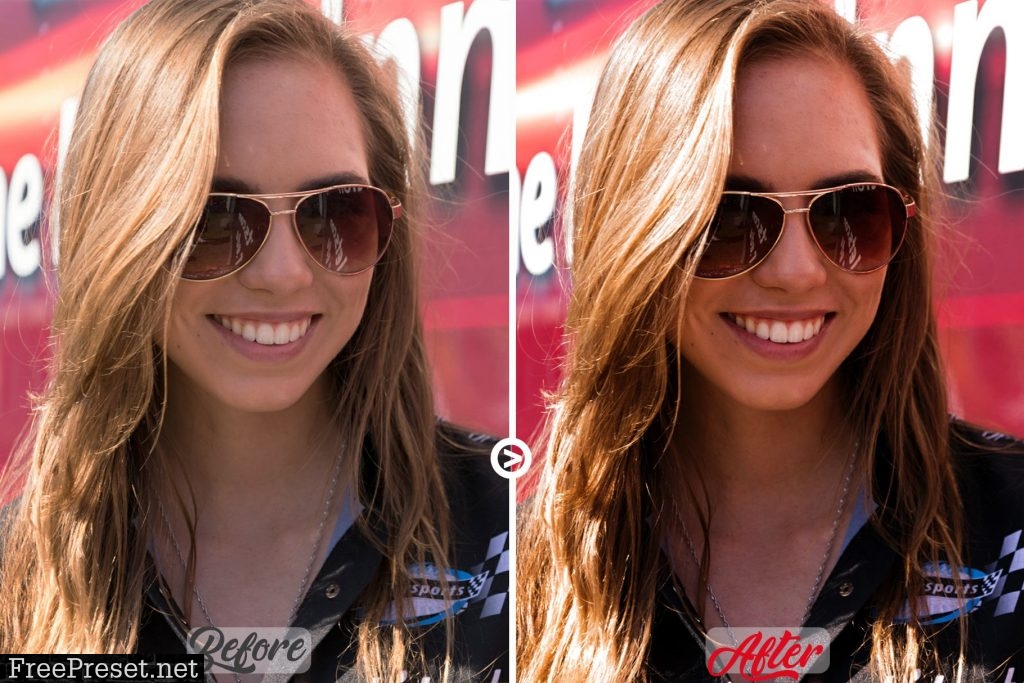

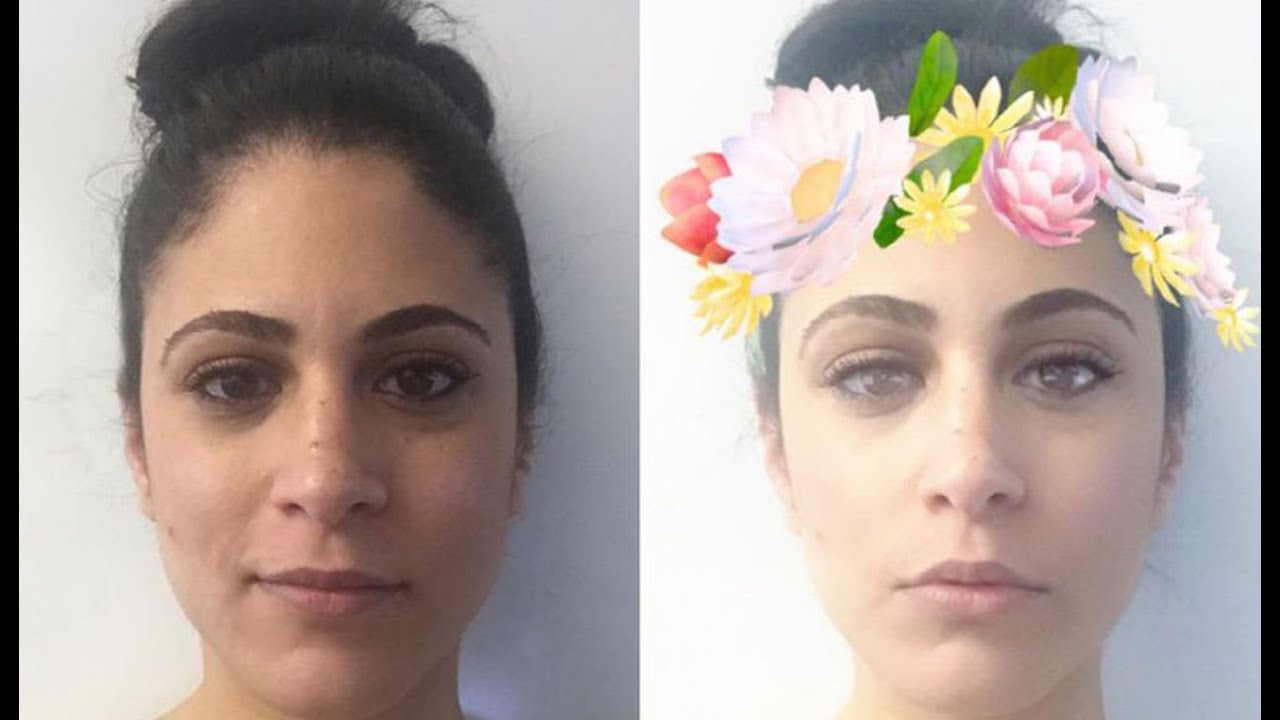

Using this other information as well as an individual exposure, the computer synthesizes the final image, ever more automatically and invisibly. Now, under the hood, phone cameras pull information from multiple image inputs into one picture output, along with drawing on neural networks trained to understand the scenes they’re being pointed at. All cameras capture information about the world-in the past, it was recorded by chemicals interacting with photons, and by definition, a photograph was one exposure, short or long, of a sensor to light. What’s changed is this: The cameras know too much. But they are also not pictures as they were understood in the days before you took photographs with a computer. Snap a selfie, and that’s what you get.įaceApp adding substantially more George Clooney to my face than actually exists (Alexis Madrigal / FaceApp) What makes the iPhone XS’s skin-smoothing remarkable is that it is simply the default for the camera. Other phones have a flaw-eliminating “beauty mode” you can turn on or off, too. FILTERS FOR PHOTOS TO MAKE ME LOOK BETTER UPGRADEIn the smartphone era, apps from Snapchat to FaceApp to Beauty Plus have offered to upgrade your face. People have always sought out good light. This isn’t a totally new phenomenon: Every digital camera uses algorithms to transform the different wavelengths of light that hit its sensor into an actual image. I wasn’t so much “taking pictures” as the phone was synthesizing them. Over weeks of taking photos with the device, I realized that the camera had crossed a threshold between photograph and fauxtograph. Speaking as a longtime iPhone user and amateur photographer, I find it undeniable that Portrait mode-a marquee technology in the latest edition of the most popular phones in the world-has gotten glowed up. He’s not the only one who has noticed the effect, either, though Apple has not acknowledged that it’s doing anything different than it has before. 
“That’s weird … I look like I’m wearing foundation.” “I do not look like that,” he said in a video demonstrating the phenomenon. Hilsenteger compared it to a kind of digital makeup. FILTERS FOR PHOTOS TO MAKE ME LOOK BETTER SKINWhen a prominent YouTuber named Lewis Hilsenteger (aka “ Unbox Therapy”) was testing out this fall’s new iPhone model, the XS, he noticed something: His skin was extra smooth in the device’s front-facing selfie cam, especially compared with older iPhone models.
